Abstract

This thesis addresses the analysis of digital music signals to extract meaningful information about the tonal content of an audio excerpt.

This work lies in the field of Music Information Retrieval, a discipline that aims to extract high-level, human-readable information from a musical composition. We cover the retrieval of tonal content at different levels of abstraction.

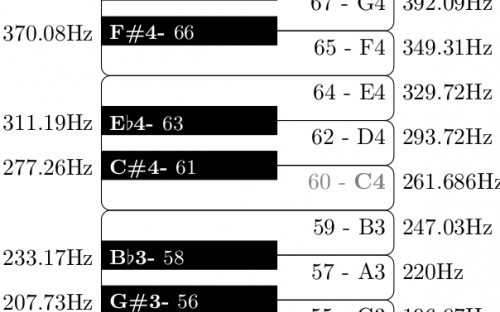

First, we present a method for estimating the presence of short-term stationary sinusoidal components with precise frequency resolution. Sinusoidal components are the basic atoms of a musical note or chord, and therefore carry the tonal and harmonic information.

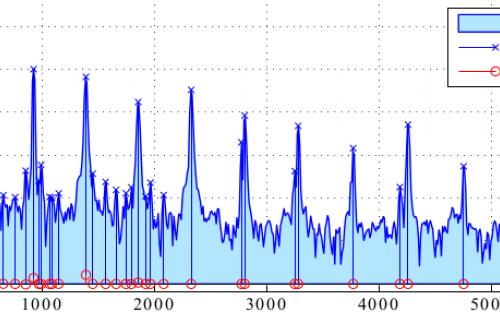

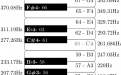

Next, we present an exhaustive comparative analysis of different spectral-peak-based tuning frequency estimation algorithms. The tuning frequency is usually set to the widely accepted standard of 440 Hz. However, several musical pieces exhibit a slight deviation in the tuning frequency. A reliable reference frequency estimation method is therefore essential to avoid degrading the performance of systems that use this information.
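To make the effect of a tuning deviation concrete, the following minimal sketch expresses a reference frequency as a signed offset in cents from 440 Hz and shows how the same spectral peak can map to different notes depending on the assumed reference. It is an illustration only, not one of the estimation algorithms compared in the thesis; the function names and the example frequencies (450 Hz, 460 Hz) are assumptions chosen for the demonstration.

```python
import math

A4_STANDARD_HZ = 440.0  # widely accepted standard tuning frequency


def deviation_in_cents(f_ref_hz, standard_hz=A4_STANDARD_HZ):
    """Signed deviation of a reference frequency from the standard, in cents.

    100 cents correspond to one equal-tempered semitone.
    """
    return 1200.0 * math.log2(f_ref_hz / standard_hz)


def nearest_midi_pitch(freq_hz, f_ref_hz=A4_STANDARD_HZ):
    """Nearest equal-tempered MIDI pitch, with A4 (MIDI 69) placed at f_ref_hz."""
    return round(69 + 12 * math.log2(freq_hz / f_ref_hz))


if __name__ == "__main__":
    # Hypothetical recording tuned to 450 Hz instead of 440 Hz.
    print(deviation_in_cents(450.0))
    # The same 460 Hz peak is assigned to a different note depending on
    # which reference frequency the system assumes.
    print(nearest_midi_pitch(460.0, 440.0), nearest_midi_pitch(460.0, 450.0))
```

In this example a 10 Hz error in the assumed reference is enough to shift a peak to the neighbouring semitone, which is the kind of degradation a reliable reference frequency estimate is meant to prevent.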

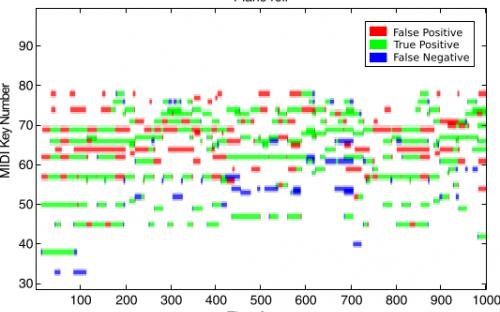

Then, we present a novel system to measure the salience of a given sinusoidal component in a mixture of partials generated in a polyphonic composition. This measure can be used as a front-end for an automatic music transcription system.

We then show how to use the harmonic mid-level representation known as the Pitch Class Profile to detect musical chord boundaries in a song.
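As a rough illustration of what a Pitch Class Profile contains, the sketch below folds a list of spectral peaks onto the 12 pitch classes and accumulates their energy. It is a generic textbook-style formulation under simplifying assumptions (peaks given as frequency and magnitude pairs, nearest-semitone assignment, energy weighting, normalisation to unit sum), not the specific representation or the chord boundary detector used in the thesis; the function name and parameters are hypothetical.

```python
import math


def pitch_class_profile(peaks, f_ref_hz=440.0):
    """Fold (frequency_hz, magnitude) spectral peaks into a 12-bin profile.

    Bin 0 is C, bin 1 is C#, ..., bin 11 is B. Each peak adds its energy
    (squared magnitude) to the bin of its nearest equal-tempered pitch.
    The result is normalised to sum to 1 when any energy is present.
    """
    pcp = [0.0] * 12
    for freq_hz, magnitude in peaks:
        if freq_hz <= 0:
            continue  # skip invalid peaks
        midi = 69 + 12 * math.log2(freq_hz / f_ref_hz)
        pitch_class = round(midi) % 12
        pcp[pitch_class] += magnitude ** 2
    total = sum(pcp)
    return [value / total for value in pcp] if total > 0 else pcp


if __name__ == "__main__":
    # Illustrative peaks near C4, E4 and G4 (a C major triad).
    c_major_peaks = [(261.63, 1.0), (329.63, 1.0), (392.00, 1.0)]
    print(pitch_class_profile(c_major_peaks))
```

A sequence of such profiles computed frame by frame changes noticeably when the underlying chord changes, which is the property that makes the representation useful for locating chord boundaries.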

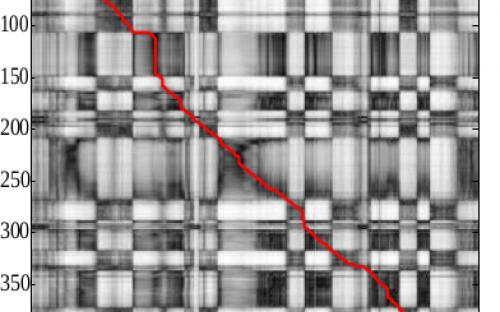

Finally, we address the task of cover song identification, that is, identifying different renditions of a given song. We propose an automatic method to combine the results of several different systems in order to improve the detection accuracy of a cover song identification algorithm.